In April 2026, developer Ayush Jaipuriar shared a frustrating comparison on X: a complex task took 2 hours with DeepSeek V4 Pro, while Codex 5.5 in normal mode finished the same task in just 20 minutes.

This is a 6x speed difference. Another developer, Wang Boyuan, also mentioned that problems he struggled with for half a day were resolved in one try with Codex.

The question arises: DeepSeek V4 boasts a million-token long context and is priced attractively (0.15 yuan for 50,000 tokens), so why does it lag behind the more expensive Codex in practical work?

The answer lies not in model parameters but in a fundamental shift: the competition in AI programming has changed from ‘who is smarter’ to ‘who can get the job done’.

A Task Completed Six Times Faster: Developers Vote with Their Feet

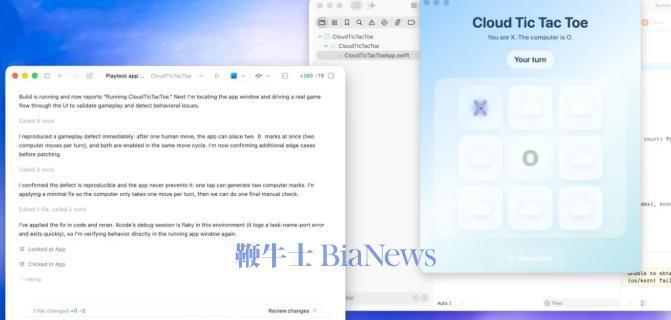

When developers talk about Codex, they are no longer referring to an AI that simply writes code. They are discussing a digital colleague that understands the entire project, can click the mouse, connect to servers, write tests, and even fix bugs.

It’s like asking someone to help renovate your house. DeepSeek V4 is an extremely intelligent designer with excellent memory (million-token context) who remembers all your requirements and produces a beautiful blueprint. In contrast, Codex is the experienced worker equipped with all the necessary tools (drills, levels, paintbrushes) who not only understands the blueprint but can also execute the tasks: building walls, laying wires, assembling furniture, and cleaning up the site.

The gap between them is the difference between ‘blueprint’ and ‘completion’.

- Task Completion Rate: Developer Chi Jianqiang ran into a problem that Claude Code failed to solve in two attempts, while Codex resolved it on the first try.

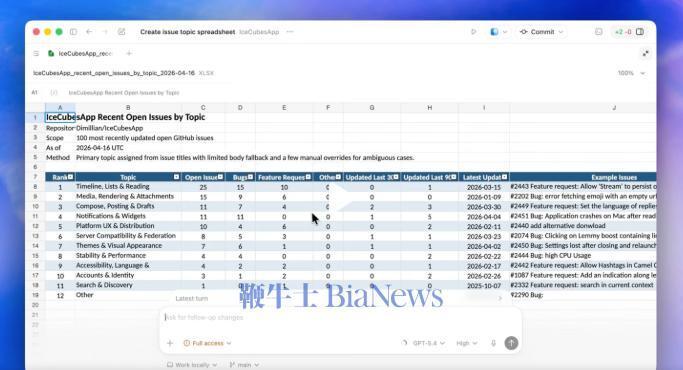

- Full Process Coverage: Codex can perform tasks such as “implementing an RBAC permission module,” “identifying and fixing all null pointer exceptions,” “upgrading the project from Spring Boot 2.7 to 3.2,” and even “generating API documentation for the entire project.” This covers the entire software lifecycle, from design and coding through testing and deployment.

- Execution Environment: Codex can run in your local terminal, connect to remote servers via SSH, and operate browsers for front-end debugging. It has added more than 90 official plugins, integrating deeply with GitHub, CI/CD pipelines, and other tools developers use daily.

While DeepSeek V4 performs well on code generation quality (scoring 8/10 in tests, with clearly structured output), it fundamentally remains a model that needs to be “fed” and “directed.”

A developer named Vladimir noted after using over 14 million tokens that V4’s biggest issue is frequently ignoring critical engineering constraint files (like AGENTS.md), necessitating external frameworks to enforce tool calls.

Codex’s ‘Engineering Brain’: More Than Just Smart, It’s Reliable

Codex can be that “experienced worker” because it is built around a sophisticated engineering collaboration system, rather than relying solely on the model’s “brilliant insights.”

First, it has a ‘project manager’ to keep track of key points.

In long-chain development, conversation history snowballs. Codex has an intelligent context compression mechanism built in. It doesn’t clumsily discard early conversations. Instead, it actively extracts the project’s core objectives, to-dos, and key decisions, and generates a concise “project progress summary” that replaces the lengthy original records.

This ensures that the AI remains focused on the most critical tasks and does not get lost in details.
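The compression idea described above can be sketched roughly as follows. This is only an illustration, not Codex’s actual implementation: the `Message` type, the `kind` tags, the character budget, and the `keep_recent` parameter are all hypothetical, and a real system would presumably use the model itself to extract goals and decisions rather than rely on pre-tagged messages.

```python
from dataclasses import dataclass


@dataclass
class Message:
    kind: str  # hypothetical tags: "goal", "todo", "decision", "chat"
    text: str


# Kinds of messages that carry long-lived project state (an assumption).
KEEP = ("goal", "todo", "decision")


def compress(history: list[Message], max_chars: int = 200,
             keep_recent: int = 2) -> list[Message]:
    """Replace older messages with one summary once the history is too long."""
    if sum(len(m.text) for m in history) <= max_chars:
        return history  # still within budget, nothing to do

    # Split into the older part (to be summarized) and the recent tail (kept).
    split = max(len(history) - keep_recent, 0)
    old, recent = history[:split], history[split:]

    # Keep only goals, to-dos, and decisions from the older part.
    lines = [f"[{m.kind}] {m.text}" for m in old if m.kind in KEEP]
    summary = Message("summary", "Project progress summary:\n" + "\n".join(lines))
    return [summary] + recent
```

The design choice worth noting is that the summary replaces the old records instead of being appended to them, so the context shrinks while the goals and decisions survive.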

Second, it makes errors ‘reversible.’

Everyone makes mistakes, including AI. Codex implements an undo function through a technique called ghost snapshots. If the AI makes an error in one step, you can revert to the state before the operation with one click, rather than sinking deeper into faulty code.
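As a rough sketch of the snapshot-and-revert idea, the example below copies a working directory before a risky step and restores it on demand. The `Snapshot` class and its methods are hypothetical names for illustration, and copying the whole tree is an assumption made for simplicity; nothing here is taken from Codex’s own code, and a production tool would more likely build on version control than on full copies.

```python
import shutil
import tempfile
from pathlib import Path


class Snapshot:
    """A full copy of a working directory, taken before a risky step."""

    def __init__(self, workdir: Path):
        self.workdir = Path(workdir)
        # Store the copy outside the working directory itself.
        self._store = Path(tempfile.mkdtemp(prefix="snapshot-"))
        shutil.copytree(self.workdir, self._store / "tree")

    def restore(self) -> None:
        """Throw away the current state and put the saved tree back."""
        shutil.rmtree(self.workdir)
        shutil.copytree(self._store / "tree", self.workdir)

    def discard(self) -> None:
        """Delete the saved copy once it is no longer needed."""
        shutil.rmtree(self._store, ignore_errors=True)
```

A typical flow would be: take a `Snapshot`, let the agent apply its edit, run the tests, then call `restore()` if they fail or `discard()` if they pass.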

Third, it understands ‘collaborative division of labor.’

Heavy users have found that the most effective approach is not to let one Codex session handle everything. A better model is to have a main AI responsible for planning and breaking down tasks, while Codex acts as a dedicated executor, handling clearly defined small tasks like “implementing this API interface” or “fixing this bug.” This way, even if a certain link is interrupted, the loss is localized and does not derail the entire project.
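The planner and executor split can be sketched as below. The `Result` type, `run_plan`, and the idea of passing the executor in as a plain function are assumptions for illustration; in practice the executor would be a call into a coding agent session, and the subtask list would come from the planning model.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Result:
    task: str
    ok: bool
    detail: str


def run_plan(subtasks: list[str],
             executor: Callable[[str], str]) -> list[Result]:
    """Run each small task independently so one failure stays local."""
    results = []
    for task in subtasks:
        try:
            results.append(Result(task, True, executor(task)))
        except Exception as exc:
            # Record the failure and continue with the remaining tasks.
            results.append(Result(task, False, str(exc)))
    return results


def failed_tasks(results: list[Result]) -> list[str]:
    """Tasks the planner should retry or re-plan."""
    return [r.task for r in results if not r.ok]
```

Because each subtask is run and recorded on its own, the planner only has to re-issue the entries returned by `failed_tasks` instead of restarting the whole job.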

This system transforms Codex from an “occasionally impressive tool” into a “consistently reliable partner.” Its value lies not in how many lines of code it generates but in significantly shortening the distance between ‘idea’ and ‘operational software.’

V4’s Shortcomings: When the ‘Top Student’ Joins the ‘Construction Crew’

DeepSeek V4’s dilemma is that it is a product of “model thinking” competing on a battlefield that demands “engineering thinking,” where it struggles to perform at its full potential.

Li Bojie, chief scientist at Pine AI, pointed out the key issue: V4 still has significant weaknesses in the stability of its tool calls and in controlling its hallucination rate. In long-chain tasks, a small error can be magnified and ultimately cause the whole task to fail. V4 therefore has to rely on an external architecture (a harness layer) to supply capabilities such as verification, retries, and knowledge base queries.

This is akin to providing a top student with a secretary team to constantly check their homework, correct typos, and look up information to ensure stable output. In contrast, Codex has this “secretary team” built-in.
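A harness layer of the kind described here can be sketched as a wrapper that verifies each model output and retries with feedback. Everything in this example is an assumption for illustration: `call_with_harness`, `verify_tool_call`, the JSON tool-call shape with a `tool` field, and the `model` callable are hypothetical and do not reflect any specific vendor API.

```python
import json
from typing import Callable, Optional


def verify_tool_call(output: str) -> tuple[bool, str]:
    """Accept only a JSON object that names a tool (hypothetical format)."""
    try:
        call = json.loads(output)
    except json.JSONDecodeError as exc:
        return False, f"not valid JSON: {exc.msg}"
    if not isinstance(call, dict) or "tool" not in call:
        return False, "missing 'tool' field"
    return True, ""


def call_with_harness(model: Callable[[str], str], prompt: str,
                      verify: Callable[[str], tuple[bool, str]],
                      max_attempts: int = 3) -> Optional[str]:
    """Call the model, check the output, and retry with the rejection reason."""
    feedback = ""
    for _ in range(max_attempts):
        output = model(prompt + feedback)
        ok, reason = verify(output)
        if ok:
            return output
        # Tell the model why the last attempt was rejected.
        feedback = f"\nPrevious attempt rejected: {reason}"
    return None  # give up and let the caller decide what to do
```

The point of the sketch is that the checking and retrying live outside the model, which is exactly the extra machinery a model without a built-in harness needs around it.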

Industry trends are changing rapidly. Zheng Weimin, an academician of the Chinese Academy of Engineering, noted that China’s AI token consumption has surged from an average of 100 billion per day in 2024 to 140 trillion per day by 2026. The focus of competition is shifting from MaaS (Model as a Service) to TaaS (Token as a Service): competing on how much actual value each consumed token produces.

Under this new standard, although DeepSeek V4 has a low per-token price, Codex gets more done per token, and does so more reliably and more completely. Developers care about the “total cost and time to complete a project,” not “how much it costs to call an API once.”
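The difference between per-call price and per-project cost can be made concrete with a small calculation. The sketch below assumes that attempts are independent and that a task is retried until it succeeds, so the expected number of attempts is 1 divided by the success rate. The function name and all inputs other than the quoted price of 0.15 yuan per 50,000 tokens are hypothetical.

```python
# Price quoted in the article: 0.15 yuan per 50,000 tokens.
PRICE_PER_TOKEN = 0.15 / 50_000  # yuan per token


def expected_totals(tokens_per_attempt: int, price_per_token: float,
                    minutes_per_attempt: float,
                    success_rate: float) -> tuple[float, float]:
    """Expected cost (yuan) and time (minutes) to finish one task.

    Assumes independent attempts repeated until success, so the expected
    number of attempts is 1 / success_rate.
    """
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    expected_attempts = 1 / success_rate
    cost = tokens_per_attempt * price_per_token * expected_attempts
    minutes = minutes_per_attempt * expected_attempts
    return cost, minutes
```

Under these assumptions, halving the success rate doubles both the expected cost and the expected time, which is why a cheap token does not automatically mean a cheap project.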

The Second Half of Competition: Engineering Capability Determines Survival

With its extreme cost performance, the release of DeepSeek V4 has ended the era of high premiums for models, which is a victory in itself. However, it also serves as a mirror reflecting the real landscape of current AI competition: the gap in model capabilities is narrowing, while the gap in engineering capabilities is determining market stratification.

Chinese AI companies have recognized this and are racing to catch up:

- Organizational Reform: Alibaba has made two organizational adjustments within 23 days, establishing a business group and a technical committee focused on token efficiency and breaking down departmental walls to achieve end-to-end collaboration across computing power, models, and applications.

- Tool Matrix: Alibaba, Tencent, and ByteDance are intensively releasing their own coding agents (like Qoder, WorkBuddy) and Skill platforms, attempting to build their own development ecosystems.

- Computing Power Binding: DeepSeek V4 has, for the first time, included Huawei Ascend chips in its official verification list. These are priced at only a quarter of NVIDIA’s chips, with the aim of creating a highly cost-effective “national model + national chip” solution.

Thus, the fact that V4’s million-token context cannot compete with Codex is not a technical failure but a sign of the times. It tells us that AI is moving past the dazzling surface of technology into the deep waters of industrial application. Here, stability, reliability, and the ability to integrate into existing workflows matter more than any single technical metric.

The future winner may not be the one with the smartest model, but it will certainly be the one that understands how to turn intelligence into productivity.
