Introduction

As AI tools evolve from standalone applications into workflow hubs, Anthropic is reshaping developer productivity with Claude Code. From mandatory identity verification to pay-per-use billing, and from Routines to cloud-hosted Connectors, what looks like an incremental upgrade conceals a systematic contest for user control. This article dissects the product philosophy and strategic intent behind this ‘tool integration war’.

The Cost of AI Tools

Recently, I calculated my monthly expenses on AI tools. After checking my subscription management page, I found it averaged around $200. Conversations with friends in AI product development revealed a similar range of $100 to $300, with most unaware of their total spending.

According to the 2026 SaaS Management Report, heavy AI users spend between $100 and $200 monthly on AI tools, with many never tracking this total. Moreover, the ‘integration tax’—the cost of maintaining integrations, data migration, and the learning curve—accounts for 25% to 40% of total AI spending.
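To make that range concrete, a few lines of Python apply the report's 25% to 40% share to monthly spends at the levels mentioned above ($100, $200, $300). The function name is illustrative and the spend values are just examples; only the percentages come from the report.

```python
# Rough "integration tax" estimate: the share of monthly AI spend that goes to
# keeping tools wired together rather than to the tools themselves.
# The 25%-40% range is the report's figure; the spend values are examples.

TAX_LOW_PCT = 25   # lower bound of the integration-tax share, in percent
TAX_HIGH_PCT = 40  # upper bound of the integration-tax share, in percent


def integration_tax(monthly_spend: float) -> tuple[float, float]:
    """Return the (low, high) dollars per month spent on integration overhead."""
    low = monthly_spend * TAX_LOW_PCT / 100
    high = monthly_spend * TAX_HIGH_PCT / 100
    return low, high


if __name__ == "__main__":
    for spend in (100, 200, 300):
        low, high = integration_tax(spend)
        print(f"${spend}/month -> ${low:.0f} to ${high:.0f} on integration overhead")
```

At my own roughly $200 a month, that works out to somewhere between $50 and $80 spent just on keeping tools coordinated.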

In other words, for every dollar spent on AI tools, 25 to 40 cents goes toward keeping different tools working together. This overhead has led to a growing sentiment in the industry:

Truly great AI products don’t just add another tool to the toolbox; they throw the toolbox away and replace it with a fully automated workshop.

On April 14, 2026, Anthropic launched three significant updates:

- A redesigned Claude Code desktop application (Claude Code CLI v2.1.108 released; followed by v2.1.109 on April 15)

- The official launch of the Routines feature (in Research Preview)

- An update to the identity verification policy that makes verification mandatory in certain scenarios, alongside a major overhaul of the enterprise billing model

Most tech media reported these as three separate news items. However, I view them as three critical moves in the same game.

What is the endgame?

Systematically reducing the reasons to leave Claude while increasing the costs of leaving.

Desktop Reconstruction: Changing the Mindset

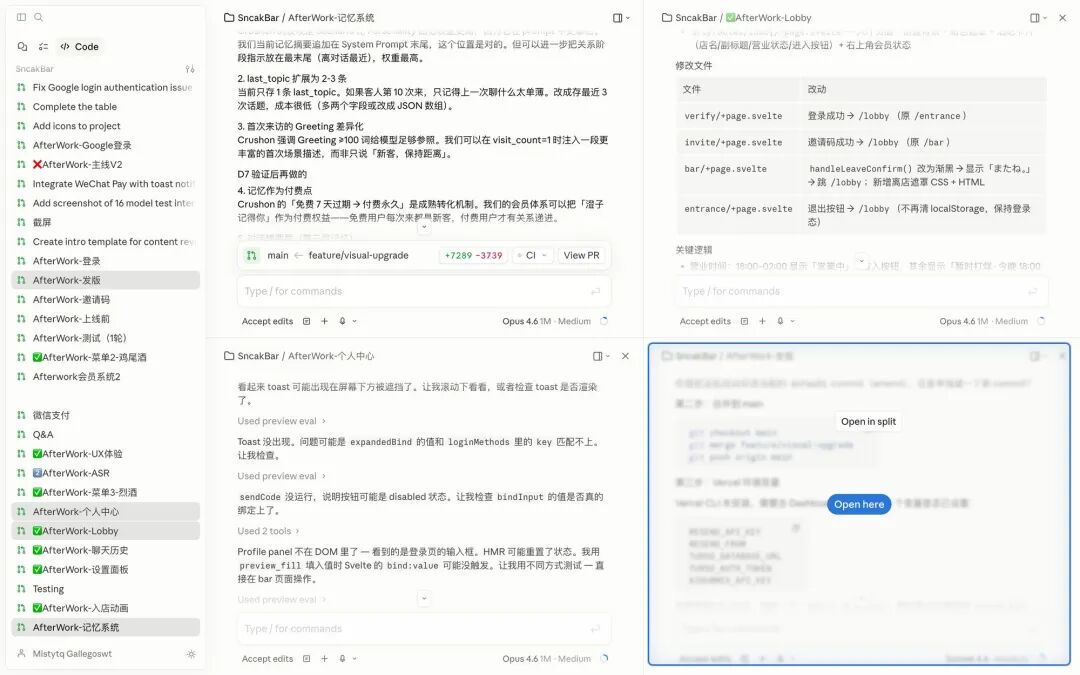

The new Claude Code desktop application released on April 14, 2026, includes several key changes:

- Side-by-side sessions: Run multiple Claude Code sessions in one window, managed from a left sidebar with a drag-and-drop layout.

- Built-in terminal: No need to switch to iTerm.

- Built-in file editor: No need to switch to VS Code.

- HTML/PDF preview: No need to open a browser.

- Quick diff viewer: Review changes the way you would with a Git diff.

Each of these features is a useful upgrade. However, together they reveal a crucial point:

Anthropic is systematically cutting off every action that could lead users away from Claude Code.

On the surface, this integrates common code editor functionalities into Claude Code.

In essence, this shifts a person’s mental model of “AI tools.”

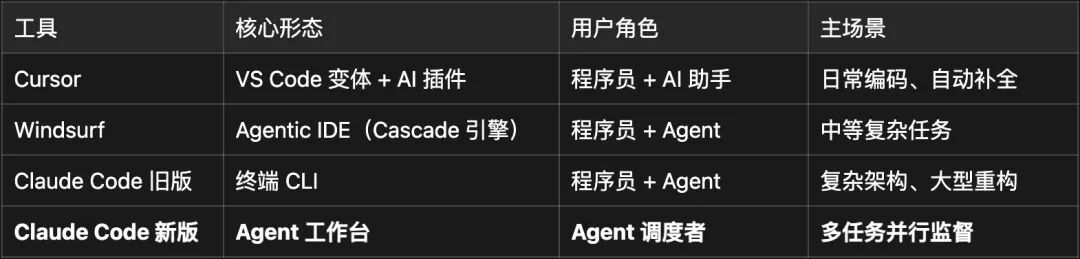

Comparing the forms of mainstream AI programming tools in 2026:

The differences are clear. Other tools aim to be “better AI editors,” while Claude Code is creating something entirely different:

A workspace where users manage multiple AI agents the way a project manager manages a team.

No longer is it about sitting in an IDE and “calling on AI for help,” but rather sitting at a workspace and “dispatching multiple AIs to do the work for them.”
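To make the difference between “calling” and “dispatching” concrete, here is a toy sketch using Python's asyncio. `run_agent` is a hypothetical stand-in for an agent session, not a Claude Code API; the point is only the shape of the workflow: waiting on one assistant at a time versus starting several and collecting their results.

```python
import asyncio


async def run_agent(name: str, task: str) -> str:
    """Hypothetical stand-in for one agent session (NOT a Claude Code API)."""
    await asyncio.sleep(0.01)  # simulate the agent working
    return f"{name}: done with {task!r}"


async def call_one_by_one(tasks: dict[str, str]) -> list[str]:
    """'Calling' AI: ask for help, wait for the answer, then ask again."""
    results = []
    for name, task in tasks.items():
        results.append(await run_agent(name, task))  # blocks until this one finishes
    return results


async def dispatch(tasks: dict[str, str]) -> list[str]:
    """'Dispatching' AI: start every agent at once, then collect the results."""
    jobs = [run_agent(name, task) for name, task in tasks.items()]
    return await asyncio.gather(*jobs)  # results come back in the order dispatched


if __name__ == "__main__":
    work = {"agent-1": "fix failing tests", "agent-2": "write release notes"}
    print(asyncio.run(dispatch(work)))
```

Both functions return the same results; what changes is that in `dispatch` the human's job becomes assigning work and reviewing output rather than waiting on each step.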

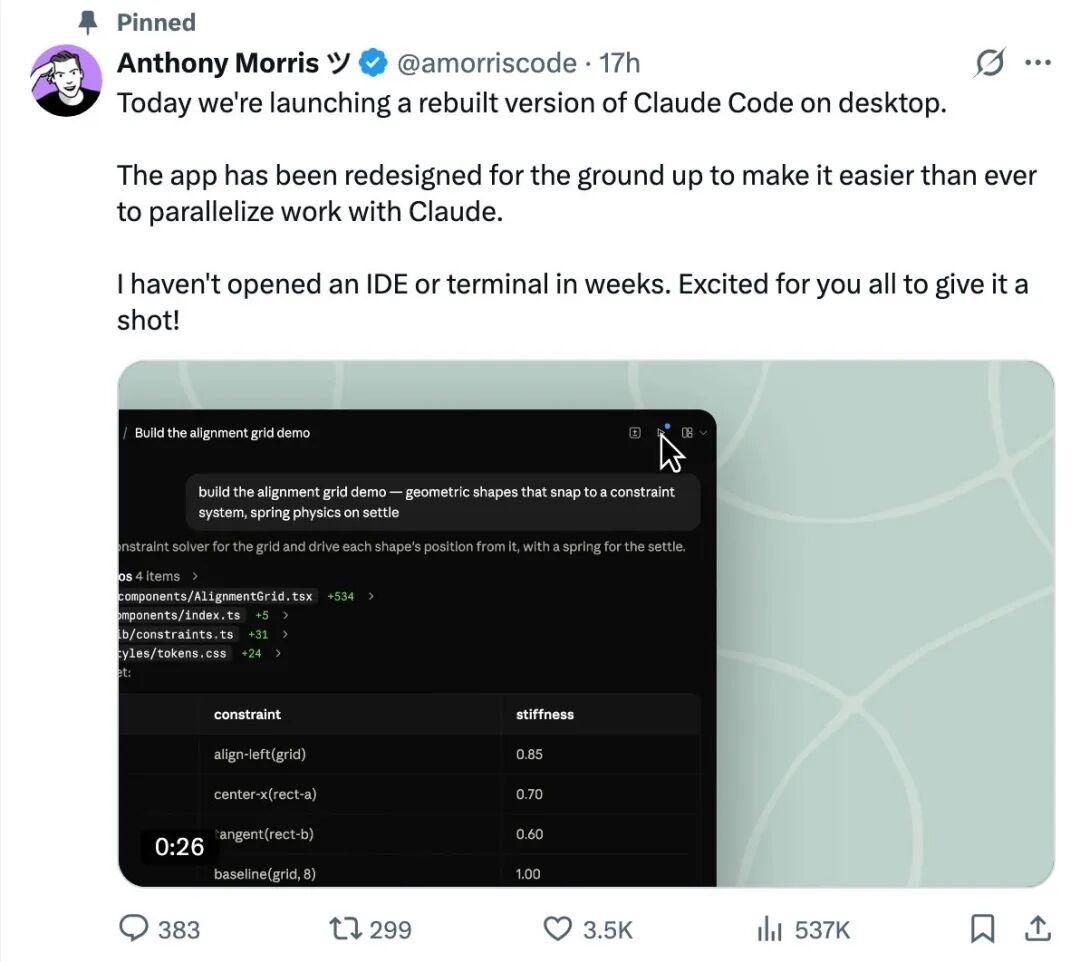

Claude Code’s desktop lead, Anthony Morris, recently tweeted a noteworthy statement:

“I haven’t opened a terminal, code editor, or IDE in weeks.”

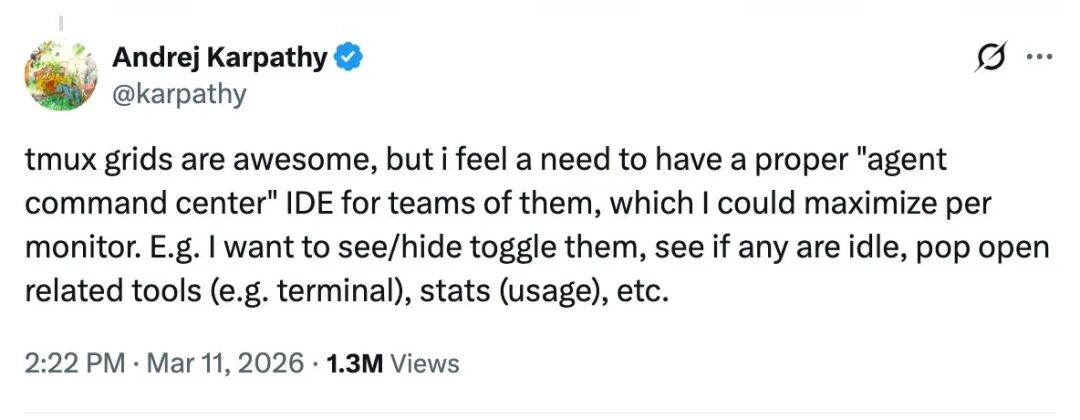

This is not just marketing language. Earlier, Andrej Karpathy made a similar observation:

The basic unit of processing in traditional programming software is changing; files are no longer the fundamental unit—agents are.

I don’t want to exaggerate and call this an “OS-level platform.” A more accurate description would be:

Anthropic is transforming Claude Code from “a tool that needs to be actively called” into “an execution platform that continuously resides within.”

The shift from “calling AI” to “dispatching AI” is just a single word difference, but it represents a complete change in mindset.

And this step is just the appetizer. The real game-changer is in the next section.

Routines: The Trojan Horse of “Integration Control” Theft

The Apparent Fully Automated Money Printer

First, let’s define Routines simply:

It is a configurable automation workflow combining Prompts, a code repository, and Connectors, running on Anthropic’s cloud without relying on local devices.
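To make the definition concrete, here is a hypothetical Python model of a Routine's three ingredients. The class and field names are illustrative only; this is not Anthropic's configuration schema, and it leaves triggers out entirely.

```python
from dataclasses import dataclass, field


@dataclass
class Routine:
    """Hypothetical model of a Routine; field names are illustrative only."""
    name: str
    prompt: str      # the instructions the agent follows on each run
    repository: str  # the code repository the run works against
    # Cloud-hosted integrations the run may use (e.g. "GitHub", "Slack").
    connectors: list[str] = field(default_factory=list)

    def describe(self) -> str:
        tools = ", ".join(self.connectors) or "no connectors"
        return f"{self.name}: runs in the cloud on {self.repository} using {tools}"


if __name__ == "__main__":
    triage = Routine(
        name="triage-issues",
        prompt="Label new issues by area and post a summary",
        repository="github.com/example/app",  # placeholder repository
        connectors=["GitHub", "Linear", "Slack"],
    )
    print(triage.describe())
```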

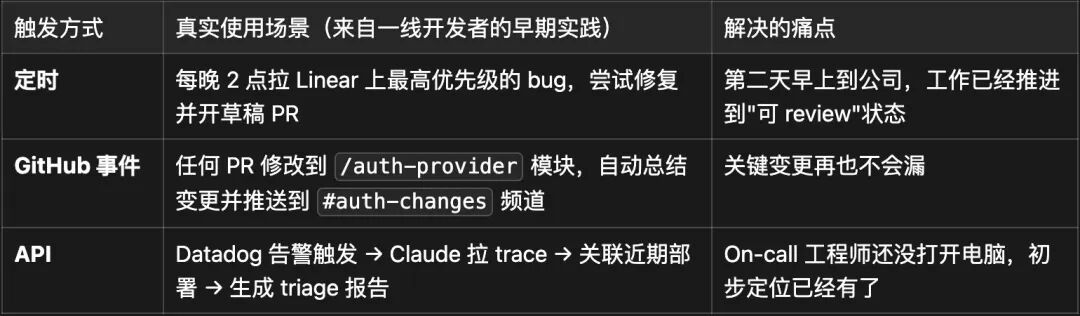

Once configured, it can be triggered in three ways:

Currently, Routines is in the Research Preview phase and is available only to paying users, with daily limits:

- Pro: 5 times/day

- Max: 15 times/day

- Team & Enterprise: 25 times/day
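A minimal sketch that encodes the Research Preview limits above as a lookup table. The names are hypothetical; the limits say nothing about how the daily counter resets or whether the count is per user or per organization, so this only checks a run count against the listed number.

```python
# Daily Routine run limits during the Research Preview, as listed above.
DAILY_ROUTINE_LIMITS = {
    "Pro": 5,
    "Max": 15,
    "Team": 25,
    "Enterprise": 25,
}


def remaining_runs(plan: str, runs_today: int) -> int:
    """Runs left today; raises KeyError for plans without Routines access."""
    limit = DAILY_ROUTINE_LIMITS[plan]
    return max(limit - runs_today, 0)


def can_run(plan: str, runs_today: int) -> bool:
    """True if the plan still has at least one run left today."""
    return remaining_runs(plan, runs_today) > 0


if __name__ == "__main__":
    print(remaining_runs("Pro", 3), can_run("Pro", 5), remaining_runs("Max", 0))
```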

Honestly, my first reaction on seeing Routines was: “I’ve been waiting for this for a long time.”

For developers bogged down by trivial tasks, this kind of “cloud-based labor” is a dream come true.

However, the real product analysis begins in the next section.

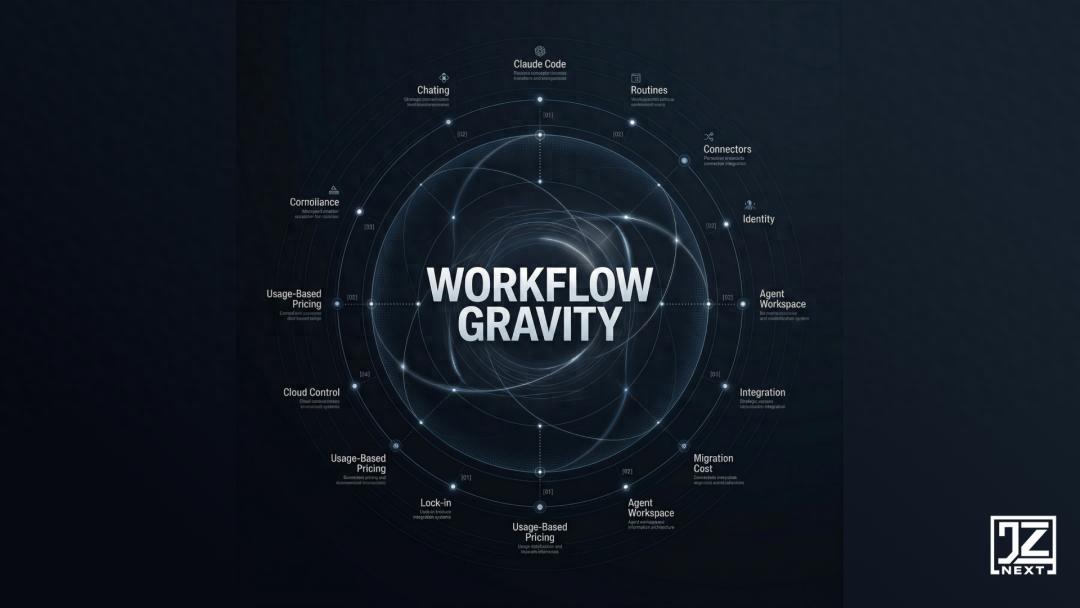

The Hidden Killer: Workflow Gravity

Most secondhand commentary misses a key line in the official documentation:

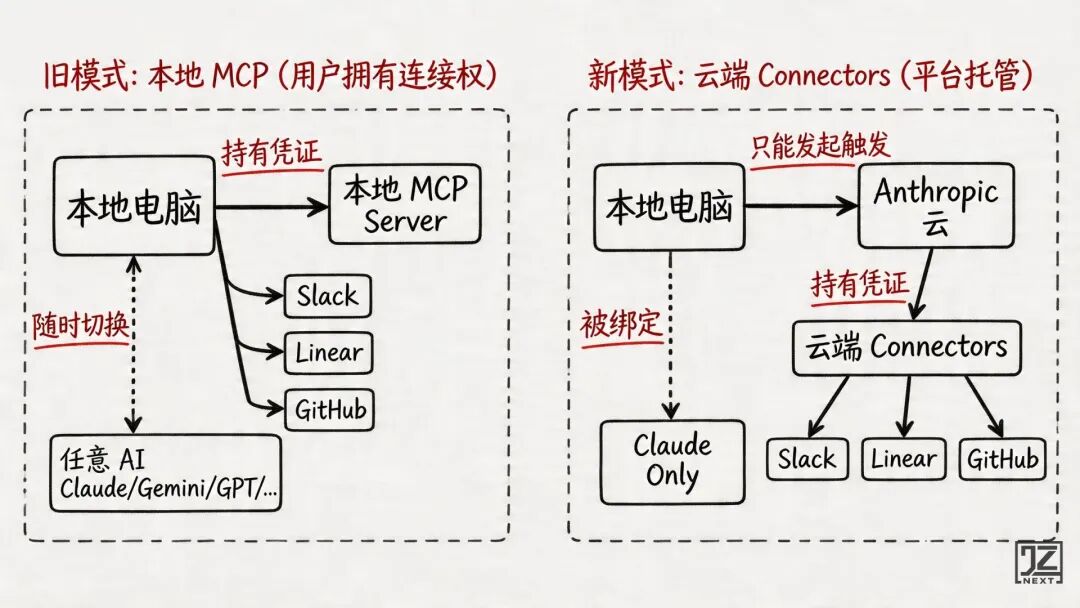

“Routines use connectors (Anthropic’s cloud-hosted MCP integrations) rather than locally-running MCP servers.”

In other words, Routines must use Anthropic’s cloud-hosted Connectors; they cannot use MCP servers running on your own machine.

What does this mean?

It means that once a Routine is configured to “connect Slack + connect Linear + connect GitHub,” the Slack token, Linear API key, and GitHub permissions are all stored in Anthropic’s cloud.

On the surface, this seems convenient. But when you calculate the costs, you realize:

If you ever want to switch to another AI (Gemini, Mistral, open-source models), you not only have to rewrite all your Prompts and migrate all your knowledge bases, but also rebuild every integration: re-authorize each tool through OAuth, reconfigure each connection, and troubleshoot each data flow.

This is a migration cost so high that it may lead you to abandon the idea altogether.
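A minimal sketch of that migration bill, assuming a simplified model in which each integration's credential is held either locally (for example, in your own MCP server configuration) or by the platform (a cloud-hosted Connector). The `Integration` class and `migration_checklist` function are hypothetical; they only encode the observation that platform-held credentials have to be re-issued from scratch when you leave.

```python
from dataclasses import dataclass


@dataclass
class Integration:
    tool: str        # e.g. "Slack"
    credential: str  # what authorizes it: token, API key, app permissions
    held_by: str     # "local" (your own config) or "platform" (cloud connector)


def migration_checklist(integrations: list[Integration]) -> list[str]:
    """List the re-authorization steps needed to move to another AI platform.

    Simplification: locally held credentials can be pointed at a new client,
    while platform-held ones must be issued again on the new platform.
    """
    steps = []
    for item in integrations:
        if item.held_by == "platform":
            steps.append(f"Re-authorize {item.tool} ({item.credential}) on the new platform")
    return steps


if __name__ == "__main__":
    setup = [
        Integration("Slack", "token", "platform"),
        Integration("Linear", "API key", "platform"),
        Integration("GitHub", "app permissions", "local"),
    ]
    for step in migration_checklist(setup):
        print(step)
```

In a Routine, every entry would be `"platform"`, so the checklist grows with every integration you add.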

Here, I want to introduce a new concept:

Traditional SaaS locks users in with “data gravity”; in the AI era, Anthropic is creating “workflow gravity.”

Data gravity prevents users from “moving data”; workflow gravity prevents users from “moving the automated processes running daily.”

The latter is more frightening because it binds not just static files but every operational nerve in the company.

Anthropic achieves this not by doing something “bad” but by precisely shifting the “ownership of integration” from the user side to the platform side.

The MCP protocol is open, but the implementation of the Connectors is closed.

An open standard with a closed implementation—this has been a top-tier strategy repeatedly validated in the software industry.

Ownership of the integration has quietly shifted from the user’s side to the platform’s side.

Thus, I see Routines as:

On the surface, a digital laborer that does work for you; at its core, a Trojan horse that confiscates “integration control.”

The convenience is real, but the price is: it becomes increasingly difficult to leave.

I’m not saying this is a trap.

It’s a good product; I will use it and recommend it to others.

However, one must understand what kind of contract this entails before signing.

The Other Side of the Coin: The Battle for Computing Power and Pricing Collapse

The first two sections discussed how Claude is working to make users want to use it more.

The next section discusses how Anthropic is, at the same time, making Claude more and more expensive to use.

These two aspects must be viewed together because they are two sides of the same decision.

Identity Verification: Not Compliance, But Anti-Theft

On April 14, 2026, Anthropic updated its identity verification policy in the help center, stating that users would be required to verify their identity in “certain usage scenarios.”

The official reason given is that “powerful technology must be used responsibly.”

Many interpreted this as a “real-name system targeting Chinese users.”

This interpretation is directionally correct but lacks depth.

The true motivation traces back two months earlier.

In February 2026, Anthropic disclosed striking figures:

Three Chinese AI companies (DeepSeek, Moonshot AI, and MiniMax) used approximately 24,000 fake accounts to send over 16 million queries to Claude, in an attempt to replicate Claude’s capabilities at industrial scale.

This is not ordinary abuse; it is a battle over the theft of model capabilities.

For Anthropic, every stolen capability translates to real monetary costs in computing power.

Thus, the true motivation for identity verification is anti-theft, not merely compliance.

It is a proactive defense:

Raising the registration threshold from “just an email” to “you must first disclose who you are.”

This motivation is entirely understandable. However, it has a cruel side effect:

Ordinary users, especially those in restricted areas like mainland China, will also be caught in this net.

Identity verification does not distinguish between “well-meaning Chinese developers” and “fake account scripts.”

It uses a broad net, and ordinary people just happen to be on the wrong side of it.

Pricing Model Collapse and Reconstruction

Next, let’s look at pricing.

Anthropic recently adjusted its enterprise pricing model:

Instead of charging primarily by “seat count,” it now charges a base fee of $20 per user per month plus billing based on actual AI usage.
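A rough comparison of the two billing models for one team. The $20 base fee is from the new model; the $60 seat price and the per-user usage amounts are hypothetical placeholders, chosen only to show how a single heavy user dominates a metered bill.

```python
BASE_FEE_PER_USER = 20  # dollars per user per month, from the new model


def seat_based_bill(num_users: int, seat_price: int) -> int:
    """Old model: a flat price per seat, regardless of usage."""
    return num_users * seat_price


def usage_based_bill(usage_per_user: list[int]) -> int:
    """New model: base fee per user plus each user's metered AI usage (dollars)."""
    return sum(BASE_FEE_PER_USER + usage for usage in usage_per_user)


if __name__ == "__main__":
    HYPOTHETICAL_SEAT_PRICE = 60        # placeholder, not a real price
    team_usage = [10, 30, 250]          # placeholder: two light users, one heavy
    print("seat-based:", seat_based_bill(len(team_usage), HYPOTHETICAL_SEAT_PRICE))
    print("usage-based:", usage_based_bill(team_usage))
```

With these placeholders, three seats cost $180 under flat pricing and $350 under base-plus-usage billing, almost entirely because of the one heavy user.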

This change significantly impacts some large enterprise clients—doubling or even tripling their costs.

Uber’s CTO, Praveen Neppalli Naga, recently made a striking disclosure:

Uber has already exhausted its entire AI budget for 2026 in just a few months.

The core reason is the surge in AI programming tool usage, especially Claude Code.

This has taught the product community a harsh lesson:

The per-user pricing model of SaaS 1.0 is suicidal in the face of a computing power black hole.

In traditional SaaS, marginal cost is close to zero: adding another user costs almost nothing, while revenue grows linearly.

AI costs, by contrast, grow linearly or even exponentially with usage: adding one heavy user means burning a great deal of inference compute.

Under this cost structure, an AI company that charges a flat per-user fee can be driven into bankruptcy by its heaviest users.
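A back-of-the-envelope sketch of that argument. The $30 flat fee, the $0.01 serving cost per usage unit, and the 1.2 markup on compute are all hypothetical; only the $20 base fee comes from the pricing change described above. Under a flat fee, margin is the fee minus usage times cost, so it turns negative past a break-even usage; under base-plus-usage billing with a markup of at least 1, margin can never fall below the base fee.

```python
# Hypothetical unit economics for one user per month. All numbers are illustrative.


def flat_fee_margin(fee: float, usage_units: float, cost_per_unit: float) -> float:
    """Provider margin when the user pays a fixed fee regardless of usage."""
    return fee - usage_units * cost_per_unit


def breakeven_usage(fee: float, cost_per_unit: float) -> float:
    """Usage level at which a flat-fee user stops being profitable."""
    return fee / cost_per_unit


def metered_margin(base_fee: float, usage_units: float, cost_per_unit: float,
                   markup: float) -> float:
    """Provider margin under a base fee plus usage billed at cost times a markup."""
    compute_cost = usage_units * cost_per_unit
    usage_revenue = compute_cost * markup
    return base_fee + usage_revenue - compute_cost


if __name__ == "__main__":
    FEE, COST = 30.0, 0.01  # hypothetical flat fee ($) and cost per usage unit ($)
    for label, units in (("light user", 500), ("heavy user", 10_000)):
        print(label,
              "flat:", round(flat_fee_margin(FEE, units, COST), 2),
              "metered:", round(metered_margin(20.0, units, COST, 1.2), 2))
    print("break-even usage:", round(breakeven_usage(FEE, COST)))
```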

Anthropic must revise its pricing structure, or it cannot survive.

The Truth Behind the Combination Punch

Now, let’s look at the three components together:

Identity verification + pay-per-use billing + daily quota limits in Research Preview

On the surface, it appears that Claude is raising prices, tightening security, and imposing quotas.

However, at a deeper level, Anthropic is doing something more important:

Filtering for the “worthy users” it wants to serve.

- Identity verification filters out: freeloaders, gray area accounts, model theft scripts.

- Pay-per-use billing filters out: abusers who could bankrupt Anthropic.

- Quota limits steer: scarce runs toward genuinely valuable tasks.

What remains are those willing to pay for value, capable of bearing pay-per-use costs, and transparent in identity.

This is a proactive “user pool reshuffle.”

Anthropic is no longer pursuing “maximizing user numbers”; it is pursuing another goal:

Every user that remains must be worthy of being served.

For ordinary users in mainland China, this means an unavoidable reality:

They are likely not on the list of “worthy users” that Anthropic recognizes—at least not officially.

They can continue to use it, but they face an increasingly unwelcome platform.

My Four Principles

The product analysis ends here, and the remaining question is: what to do next?

In fact, each AI PM’s answer to this game will differ.

A Premise: Dependency on AI Now Carries “Structural Risk”

Let’s outline a timeline:

- Before 2024: AI is a “feature”; whether to add an AI button to a product is optional.

- 2024-2025: AI is a “tool”; it is called on in daily work but isn’t essential.

- 2026: AI is “infrastructure”; dependency on it begins to carry “structural risk.”

“Structural risk” is not an exaggerated term. It means:

If supply is cut off, it tears a hole in your workflows that cannot easily be rebuilt.

Vendor lock-in is a recurring topic among CTOs, but AI lock-in differs from traditional SaaS lock-in in one important way:

Traditional SaaS lock-in primarily revolves around “data format” and “integration.” AI lock-in is multi-layered: model APIs, prompt engineering results, fine-tuning weights, embedding vector formats, knowledge base structures, integration credentials…

These elements cannot be cleanly migrated to another platform like a CSV file.

Realizing this, I began managing my use of Claude as if it were an “infrastructure dependency.”

My Asset Risk Matrix

I categorized all my “assets” embedded in Claude by migration difficulty:

This table answers a specific question: How long would it take to rebuild each asset if Claude were to cut off supply?
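The matrix itself is a judgment call, but its logic can be sketched. This hypothetical rule of thumb scores an asset by two questions the principles below turn on: does a local original exist, and can the asset be exported at all? The tiers and field names are illustrative assumptions, not the contents of the matrix.

```python
from dataclasses import dataclass


@dataclass
class Asset:
    name: str
    has_local_original: bool  # is the source of truth kept in Git/Obsidian?
    exportable: bool          # can it be exported from Claude in usable form?


def migration_risk(asset: Asset) -> str:
    """Hypothetical three-tier rule of thumb for migration difficulty."""
    if asset.has_local_original:
        return "low"     # rebuilding elsewhere is mostly copying from the original
    if asset.exportable:
        return "medium"  # recoverable, but only if exported before it's needed
    return "high"        # must be rebuilt by hand, e.g. cloud-only integrations


RISK_ORDER = {"high": 0, "medium": 1, "low": 2}


def riskiest_first(assets: list[Asset]) -> list[Asset]:
    """Sort assets so the hardest to migrate come first."""
    return sorted(assets, key=lambda a: RISK_ORDER[migration_risk(a)])


if __name__ == "__main__":
    inventory = [
        Asset("review prompts", has_local_original=True, exportable=True),
        Asset("project knowledge base", has_local_original=False, exportable=True),
        Asset("Routines + Connectors", has_local_original=False, exportable=False),
    ]
    for asset in riskiest_first(inventory):
        print(f"{migration_risk(asset):>6}  {asset.name}")
```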

Four Principles

Principle One: Core Assets Must Be Localized

Prompts, skills, and knowledge bases used for essential tasks must have an original copy in Git or Obsidian.

Here, the term “original” is significant—the local copy is the original; the one in Claude is merely a duplicate.

Ownership is always strongest locally.
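One way to keep the local copy the original is a periodic audit. In this sketch, `local` holds the texts from your Git repository or Obsidian vault and `cloud` holds the versions currently in Claude; how you collect the latter (manual export, copy and paste) is left open, and the `audit` function is hypothetical. Anything that exists only in Claude, or that has drifted from its local original, gets flagged.

```python
def audit(local: dict[str, str], cloud: dict[str, str]) -> dict[str, list[str]]:
    """Compare local originals against the copies living in Claude.

    Both arguments map an asset name (prompt, skill, knowledge-base page)
    to its full text.
    """
    # Assets that exist only in the cloud: there is no original to fall back on.
    missing_original = sorted(name for name in cloud if name not in local)
    # Assets whose cloud copy no longer matches the local original.
    drifted = sorted(
        name for name in cloud if name in local and cloud[name] != local[name]
    )
    return {"missing_original": missing_original, "drifted": drifted}


if __name__ == "__main__":
    local = {"review-prompt": "Review for bugs.", "style-guide": "Use short sentences."}
    cloud = {
        "review-prompt": "Review for bugs and style.",  # edited in Claude only
        "style-guide": "Use short sentences.",
        "release-notes": "Summarize merged PRs.",        # never saved locally
    }
    print(audit(local, cloud))
```

Each flagged item is a case where the duplicate has quietly become the original, which is exactly what this principle is meant to prevent.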

Principle Two: Beware of High-Risk Assets Bound to the Cloud

Cloud configurations such as Routines + Connectors, whose connection relationships cannot be exported, are assets with high migration difficulty.

My approach is tiered:

- Tasks like log organization and issue tagging: feel free to hand them to the cloud.

- Tasks involving core business flows and critical cross-system integrations—be cautious about relinquishing control.
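The tiering can be written down as an explicit rule so the decision isn't remade ad hoc for every new Routine. This is a hypothetical encoding of the two tiers above; the `Task` fields are assumptions about how “core business flow” and “critical cross-system integration” might be reduced to yes/no questions.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    core_business_flow: bool     # does it touch a core business process?
    cross_system_critical: bool  # is it a critical link between systems?


def placement(task: Task) -> str:
    """Hypothetical two-tier rule: only low-stakes chores go to the cloud."""
    if task.core_business_flow or task.cross_system_critical:
        return "keep under your own control"
    return "fine as a cloud Routine"


if __name__ == "__main__":
    for t in (
        Task("log organization", core_business_flow=False, cross_system_critical=False),
        Task("issue tagging", core_business_flow=False, cross_system_critical=False),
        Task("order-to-invoice sync", core_business_flow=True, cross_system_critical=True),
    ):
        print(f"{t.name}: {placement(t)}")
```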

Principle Three: Before Enabling New Features, Ask One Question
